What is data lineage? Techniques, best practices and tools

Organizations can bolster data governance efforts by tracking the lineage of data in their systems. Get advice on how to do so and how data lineage tools can help.

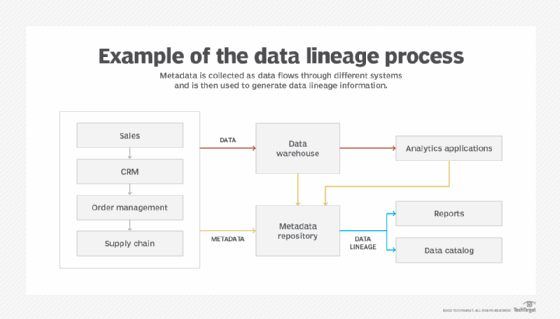

Data lineage documents the journey that data takes through an organization's IT systems, showing how it flows between them and gets transformed for different uses along the way. It uses metadata -- data about the data -- to enable both end users and data management professionals to track the history of data assets and get information about their business meaning or technical attributes.

For example, data lineage records can help data scientists, other data analysts and business users understand the data they work with and ensure that it's relevant to their information needs. Data lineage also plays a valuable role in data governance, master data management and regulatory compliance programs. Among other aspects of those initiatives, it simplifies two critical data governance procedures: analyzing the root causes of data quality issues and the impact of changes to data sets.

Data lineage information is collected from operational systems as data is processed and from the data warehouses and data lakes that store data sets for BI and analytics applications. In addition to the detailed documentation, data flow maps and diagrams can be created to provide visualized views of data lineage mapped to business processes. To simplify end-user access to lineage information, it's often incorporated into data catalogs, which inventory data assets and the metadata associated with them.

Why is data lineage important?

Information on data lineage is crucial to data management and analytics efforts. Lineage details shine a light on data to help organizations manage and use it effectively. Without access to those details, it becomes much harder to take full advantage of data's potential business value.

The following are some of the benefits that data lineage provides.

- More accurate and useful analytics. By letting analytics teams and business users know where data comes from and what it means, data lineage improves their ability to find the data they need for BI and data science uses. That leads to better analytics results and makes it more likely that data analysis work will deliver meaningful information to drive business decision-making.

- Stronger data governance. Data lineage also aids in tracking data and carrying out other key parts of the governance process. It helps data governance managers and team members make sure that data is valid, clean and consistent and that it's secured, managed and used properly.

- Tighter data security and privacy protections. Organizations can use data lineage information to identify sensitive data that requires particularly strong security. It can also be used to set different levels of user access privileges based on security and data privacy policies and to assess potential data risks as part of an enterprise risk management strategy.

- Improved regulatory compliance. Better security protections informed by data lineage can help organizations ensure that they comply with data privacy laws and other regulations. Well-documented data lineage also makes it easier to conduct internal compliance audits and report on compliance levels.

- Streamlined data management. In addition to data quality improvement, data lineage boosts a variety of other data management tasks. Examples include managing data migrations, breaking down data silos and detecting and addressing gaps in data sets.

Data lineage vs. data classification and data provenance

Data lineage is also closely aligned with data classification and data provenance, two other data management processes. Here's a look at what they are and how they differ from and relate to data lineage.

Data classification. This involves assigning data to different categories based on its characteristics, primarily for security and compliance purposes. Classification is used to categorize data based on how sensitive it is -- for example, as personal, proprietary, confidential or public information. Doing so separates data sets that need higher levels of security and more restrictive access controls from ones that don't. Data lineage provides information about data sets that can aid in classifying them.

Data provenance. Sometimes treated as a synonym for data lineage, data provenance is alternatively seen as more narrowly focused on the origins of data, including its source system and how it's generated. In that context, data lineage and data provenance can work hand in hand, with the latter providing high-level documentation of where data comes from and what it entails.
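To make the link between lineage and classification more concrete, here is a minimal Python sketch of one way lineage records could suggest a classification for derived data sets. The rule it applies (a derived data set inherits the most sensitive label among its upstream sources), the numeric ranking of the labels and all data set names are assumptions made for illustration, not something prescribed by any particular tool or policy.

```python
# Hypothetical sensitivity ranking; the relative order is an assumption.
SENSITIVITY_RANK = {"public": 0, "proprietary": 1, "confidential": 2, "personal": 3}


def suggest_classifications(upstream: dict[str, list[str]],
                            known: dict[str, str]) -> dict[str, str]:
    """Suggest a label for each derived data set from its lineage.

    `upstream` maps a derived data set to the data sets it is built from.
    `known` holds labels already assigned to source data sets.
    Assumes the lineage is acyclic and every source has a known label.
    """
    resolved = dict(known)

    def resolve(name: str) -> str:
        if name not in resolved:
            parents = upstream[name]
            # Assumed rule: the most sensitive upstream label wins.
            resolved[name] = max((resolve(p) for p in parents),
                                 key=SENSITIVITY_RANK.__getitem__)
        return resolved[name]

    for name in upstream:
        resolve(name)
    return resolved


# Illustrative data set names only.
known_labels = {
    "crm.customers": "personal",
    "web.page_catalog": "public",
    "finance.price_list": "proprietary",
}
lineage = {
    "warehouse.dim_customer": ["crm.customers"],
    "warehouse.dim_product": ["web.page_catalog", "finance.price_list"],
    "reports.sales_by_customer": ["warehouse.dim_customer", "warehouse.dim_product"],
}
suggested = suggest_classifications(lineage, known_labels)
```

A suggestion produced this way would still need review by a data steward; the sketch only shows how lineage narrows down which data sets deserve a closer look.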

Data lineage and data governance

The essence of data governance is creating corporate data policies and ensuring that people comply with them. Such policies can span an array of intents, including directives on data protection, validation and usage. Data governance managers and data stewards must solicit data requirements from business users and work with members of the decision-making data governance committee to agree on common data definitions, specify data quality metrics and develop the policies and associated procedures.

It's a big challenge, though, to bridge the gap between defining data governance policies and implementing them. That's where data lineage comes in. It documents data sources and flows, enabling governance teams to monitor how data moves through systems and is modified and used. The lineage information helps them ensure that proper data security and access controls are in place and that data is stored, maintained and used in accordance with governance policies.

Data lineage can also ease specific governance-related tasks. For example, without a way to determine where data errors are introduced into systems, it's difficult for data stewards and data quality analysts to identify and fix them. That has consequences: If data flaws aren't caught, an organization may be plagued by inconsistent or inaccurate analytics results that lead to bad business decisions.

In root cause analysis of data errors, lineage records provide visibility into the sequence of processing stages a data set goes through. Quality levels can be examined at each stage to find where data errors originate. Working backward from where an error is first identified, a data steward can check whether the data conformed to expectations at earlier points or included the error then. By pinpointing the stage at which the data was compliant upon entry but flawed upon exit, workers involved in a data governance program can eliminate the error's root cause instead of just correcting the bad data.
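The backward walk described above can be sketched in a few lines of Python. This is an illustration under simplifying assumptions: the pipeline is a linear list of stages, the data can be replayed to capture a snapshot at each stage boundary, and a single hypothetical `is_valid` check stands in for an organization's data quality rules. The stage names and the deliberate bug are invented for the example.

```python
from dataclasses import dataclass
from typing import Callable, Optional

Row = dict


@dataclass
class Stage:
    name: str
    transform: Callable[[list[Row]], list[Row]]


def find_faulty_stage(stages: list[Stage],
                      source_rows: list[Row],
                      is_valid: Callable[[Row], bool]) -> Optional[str]:
    """Return the stage whose input passed the check but whose output failed."""
    # Replay the pipeline, keeping a snapshot at every stage boundary.
    snapshots = [source_rows]
    for stage in stages:
        snapshots.append(stage.transform(snapshots[-1]))

    # Work backward from the final output toward the source.
    for i in range(len(stages) - 1, -1, -1):
        input_ok = all(is_valid(row) for row in snapshots[i])
        output_ok = all(is_valid(row) for row in snapshots[i + 1])
        if input_ok and not output_ok:
            return stages[i].name
    return None  # No stage introduced the error (or the source was already bad).


# Hypothetical three-stage pipeline with a deliberate sign bug in the middle.
pipeline = [
    Stage("extract", lambda rows: [dict(r) for r in rows]),
    Stage("convert_currency",
          lambda rows: [{**r, "amount": r["amount"] * -1.1} for r in rows]),
    Stage("load", lambda rows: [dict(r) for r in rows]),
]
source = [{"id": 1, "amount": 10.0}, {"id": 2, "amount": 25.0}]

culprit = find_faulty_stage(pipeline, source, lambda r: r["amount"] >= 0)
clean = find_faulty_stage([pipeline[0], pipeline[2]], source,
                          lambda r: r["amount"] >= 0)
```

Here `culprit` is `"convert_currency"`, the stage where valid input became invalid output, while `clean` is `None` because neither remaining stage alters the amounts. In practice, lineage tools typically work from stored quality metrics per stage rather than replaying data, so treat the replay step as a stand-in.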

Data lineage is useful, too, in doing impact analysis to stay on top of issues caused by changes to source data formats and structures, a common problem in today's increasingly dynamic data environments.

When data is changed, there may be unintended consequences downstream. By working forward from the point of data creation or collection, a data steward can rely on data lineage documentation to help trace data dependencies and identify processing stages that are affected by the changes. Those stages can then be reengineered to accommodate the changes and ensure that data remains consistent in different systems.
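Working forward through dependencies amounts to a graph traversal. The following Python sketch assumes lineage is available as a list of (upstream, downstream) pairs and uses a breadth-first search to list everything affected by a change. The data set names are hypothetical.

```python
from collections import deque


def downstream_of(edges: list[tuple[str, str]], changed: str) -> list[str]:
    """List every data set reachable from `changed`, nearest first."""
    graph: dict[str, list[str]] = {}
    for upstream, downstream in edges:
        graph.setdefault(upstream, []).append(downstream)

    affected: list[str] = []
    seen = {changed}
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                affected.append(nxt)
                queue.append(nxt)
    return affected


# Hypothetical lineage edges: (upstream, downstream).
LINEAGE_EDGES = [
    ("crm.customers", "staging.customers"),
    ("staging.customers", "warehouse.dim_customer"),
    ("warehouse.dim_customer", "reports.churn_dashboard"),
    ("warehouse.dim_customer", "reports.revenue_by_region"),
    ("erp.orders", "reports.revenue_by_region"),
]

impacted = downstream_of(LINEAGE_EDGES, "crm.customers")
```

With these edges, a change to `crm.customers` reaches the staging table, the customer dimension and both reports, while a change to `erp.orders` reaches only `reports.revenue_by_region`. Reversing each edge before the traversal gives the backward view used in root cause analysis.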

Key data lineage techniques

Various techniques can be used to collect and document data lineage information. They aren't necessarily mutually exclusive -- an organization may use more than one lineage technique, depending on its application needs and the nature of its data environment. The available methods include the following:

- Data tagging. By examining metadata, tags can be applied to data sets to help describe and characterize them for data lineage purposes. Tagging can be done manually by data stewards, other data governance team members and end users or automatically by software. For example, data lineage tools and lineage functionality built into data governance software often include automated algorithms that users can run to tag data sets.

- Pattern-based lineage. This approach looks for patterns in multiple data sets, such as similar data elements, rows and columns. Their presence indicates that data sets are related to each other and may be part of a data flow, while differences in data values or attributes are a sign that the data was transformed when it moved from one system to another. The data transformations and data flows can then be documented as part of data lineage records.

- Parsing-based lineage. In this case, data lineage tools parse data transformation logic, runtime log files, data integration workflows and other data processing code to identify and extract lineage information. Parsing offers an end-to-end approach to tracing data lineage in different systems and can be more accurate than pattern-based lineage, but it's also more complex.
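As a rough illustration of the parsing-based approach, the Python sketch below pulls table-level lineage out of a small SQL script with regular expressions. It is deliberately simplistic: it assumes statements of the form `INSERT INTO ... SELECT ... FROM ... JOIN ...`, separated by semicolons, and would miss subqueries in unusual positions, CTEs, views and most vendor-specific syntax. Commercial lineage tools generally rely on full SQL parsers instead. The table names are invented.

```python
import re

# Target table of an INSERT statement.
INSERT_RE = re.compile(r"\binsert\s+into\s+([\w.]+)", re.IGNORECASE)
# Any table that follows FROM or JOIN is treated as a source.
SOURCE_RE = re.compile(r"\b(?:from|join)\s+([\w.]+)", re.IGNORECASE)


def extract_table_lineage(sql: str) -> list[tuple[str, str]]:
    """Return (source_table, target_table) pairs found in the script."""
    edges: list[tuple[str, str]] = []
    for statement in sql.split(";"):
        target = INSERT_RE.search(statement)
        if target is None:
            continue  # Not an INSERT ... SELECT statement; skip it.
        for source in SOURCE_RE.findall(statement):
            edge = (source, target.group(1))
            if edge not in edges:
                edges.append(edge)
    return edges


# Hypothetical ETL script.
ETL_SCRIPT = """
INSERT INTO warehouse.dim_customer
SELECT c.id, c.email, r.region_name
FROM staging.customers c
JOIN staging.regions r ON c.country = r.country;

INSERT INTO reports.churn_dashboard
SELECT region_name, COUNT(*)
FROM warehouse.dim_customer
GROUP BY region_name;
"""

edges = extract_table_lineage(ETL_SCRIPT)
```

The resulting pairs link both staging tables to `warehouse.dim_customer` and that table to `reports.churn_dashboard`, which is the kind of edge list a lineage repository could store and visualize.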

Another approach is fully manual: interviewing business users, BI analysts, data scientists, data stewards, data integration developers and other workers about how data moves through systems and gets used and modified. The information that's gathered can be used to map out data flows and transformations, perhaps as a starting point for a data lineage initiative before implementing more automated techniques.
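Of the automated techniques listed above, pattern-based lineage is perhaps the easiest to illustrate without access to any processing code. The Python sketch below compares the values in each pair of columns from two data sets and flags pairs with heavy overlap as candidate lineage links. The use of Jaccard similarity, the 0.8 threshold and the sample data are all assumptions chosen for the example; real tools combine several signals, such as names, data types and value distributions.

```python
def jaccard(a: list, b: list) -> float:
    """Share of distinct values that two columns have in common."""
    set_a, set_b = set(a), set(b)
    if not set_a and not set_b:
        return 0.0
    return len(set_a & set_b) / len(set_a | set_b)


def candidate_links(source: dict[str, list],
                    target: dict[str, list],
                    threshold: float = 0.8) -> list[tuple[str, str, float]]:
    """Return (source_column, target_column, score) for likely matches."""
    links = []
    for s_col, s_vals in source.items():
        for t_col, t_vals in target.items():
            score = jaccard(s_vals, t_vals)
            if score >= threshold:
                links.append((s_col, t_col, score))
    return links


# Hypothetical column samples from an operational system and a warehouse table.
crm_customers = {
    "customer_id": [101, 102, 103, 104],
    "email": ["a@x.com", "b@x.com", "c@x.com", "d@x.com"],
    "country": ["US", "DE", "US", "FR"],
}
dim_customer = {
    "cust_key": [101, 102, 103, 104],
    "email_lower": ["a@x.com", "b@x.com", "c@x.com", "d@x.com"],
    "region": ["AMER", "EMEA", "AMER", "EMEA"],
}

links = candidate_links(crm_customers, dim_customer)
```

In this sample, `customer_id` pairs with `cust_key` and `email` with `email_lower`. The `country` and `region` columns share no values, so a pure overlap check can't connect them; confirming that one was derived from the other would take the transformation logic or a reference-data mapping.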

Data lineage best practices

Here are some best practices to help keep the data lineage process on track and ensure that it provides accurate and useful information about data sets.

- Involve business executives and users from the start. Data governance programs need executive support and participation to succeed, and the same applies to data lineage. Backing from senior executives is a must to get approval and funding. Business managers and workers should also be involved to ensure that data management teams fully understand how data is used in business processes and to verify that data lineage information is relevant and valid.

- Document both business and technical data lineage. Business lineage focuses at a high level on where data originated, how it flows and its business context. Technical lineage provides details about data transformations, integrations and pipelines, as well as a mix of table-, column- and query-level lineage views. Collecting both delivers useful information to business users and analytics teams on one hand and data architects, data modelers, data quality analysts and other IT professionals on the other.

- Tie data lineage to real business and IT needs. Data lineage shouldn't be an academic exercise. To produce the expected benefits, it needs to help enable better business decisions and strategies, as well as more effective data governance, improved data quality and other data management gains. Otherwise, it's likely to be a wasted investment.

- Take an enterprise-wide approach to data lineage. A data lineage process that focuses only on some data sets also won't be as beneficial as it could be. To really pay off, it should be a comprehensive effort that involves all of an organization's data, with a single metadata repository underpinning the lineage work.

- Create a data catalog with embedded data lineage info. Finding and understanding relevant data is often a big challenge for BI and analytics users. By building a data catalog, a data management team can provide them with an inventory of available data assets that also includes lineage information.

What to look for in data lineage tools

Manually collecting metadata and documenting data lineage requires a significant resource investment. It's also prone to error, which can cause big problems, especially as organizations increasingly rely on data analytics to drive business operations. As a result, data governance efforts benefit from tools that manage representations of data lineage and automatically map them across the enterprise.

If you decide to evaluate technology for a possible purchase, look for data lineage tools that can do the following:

- natively access a broad array of data sources and data products, survey the metadata they contain and collect it for data governance uses, increasingly through the use of AI and machine learning algorithms;

- aggregate the captured metadata into a centralized repository;

- infer data types and match common uses of reference data to data elements from different systems;

- provide simplified presentations of the aggregated metadata to end users and support collaborative efforts to validate the metadata descriptions;

- document end-to-end mappings of how data flows through your organization's systems;

- generate visualized representations of data lineage;

- include APIs for developers to use in building applications that can query the lineage records;

- create an inverted index to map data element names to their uses in different processing stages;

- offer a search capability to rapidly trace the flow of data from its origination point to its downstream targets; and

- enable users to monitor data flows both forward and backward.
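One item in the list above, the inverted index, is simple enough to sketch. The Python example below assumes each processing stage can report the data elements it reads or writes, then inverts that mapping so a user can look up an element name and see every stage that touches it. The stage and element names are hypothetical, and a real tool would likely record more detail, such as whether the element is read or written.

```python
from collections import defaultdict


def build_inverted_index(stage_elements: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each data element name to the stages that use it, in pipeline order."""
    index: dict[str, list[str]] = defaultdict(list)
    for stage, elements in stage_elements.items():
        for element in elements:
            if stage not in index[element]:  # Ignore repeats within a stage.
                index[element].append(stage)
    return dict(index)


# Hypothetical stages and the data elements each one uses.
STAGE_ELEMENTS = {
    "extract_crm": ["customer_id", "email", "country"],
    "standardize_addresses": ["customer_id", "country"],
    "load_dim_customer": ["customer_id", "email"],
}

element_index = build_inverted_index(STAGE_ELEMENTS)
```

Looking up `element_index["country"]` returns the extract and standardization stages, which is the starting point for tracing that element forward or backward.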

Data lineage vendors

There's a plethora of data lineage technology options available to consider. Tools for documenting and managing data lineage are offered by several different types of vendors, including the following:

- large IT vendors that sell data management platforms, such as IBM, Informatica, Microsoft, Oracle, SAP and SAS, as well as cloud platform providers AWS and Google Cloud;

- software vendors with broad product portfolios that include data management and governance tools, such as Hitachi Vantara, OneTrust, Precisely and Quest Software;

- vendors that focus on data management and governance, such as ASG Technologies, Ataccama, Boomi, Collibra, Semarchy, Syniti and Talend;

- metadata management and data lineage specialists, such as Alex Solutions, Manta and Octopai; and

- vendors of data catalog tools, such as Alation, Atlan, Data.world and OvalEdge.

Vendors that offer self-service data preparation software for data engineers and analytics teams, such as DataRobot and Alteryx's Trifacta unit, also support data lineage capabilities. So do various vendors of BI and analytics tools, which provide lineage features for use within the applications that run on those tools.