data mart (datamart)

What is a data mart?

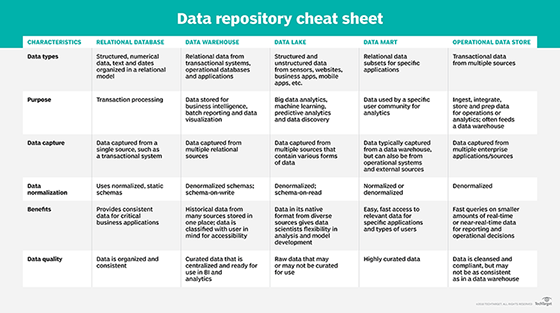

A data mart is a repository of data designed to serve a particular community of knowledge workers. Data marts let users retrieve information for a single department or subject, which improves response times. Because data marts hold only subject-specific data, they usually require less space than enterprise data warehouses, making them easier to search and cheaper to run.

Types of data marts

There are three basic types of data marts:

- A dependent data mart offers centralization: all of its data is sourced from a single enterprise data warehouse. There are two ways to build a dependent data mart: one in which users can access both the data mart and the data warehouse, and one in which users can access only the data mart. The latter method can produce what is commonly called a data junkyard, in which all the data starts from a common source but much of it ends up scrapped or junked.

- An independent data mart is built without a central data warehouse and is well suited to smaller groups within a business or organization. Independent data marts have no relationship with the enterprise data warehouse or with any other data mart. Data is loaded from internal or external sources, and analysis is performed autonomously. Because independent data marts do not interact with a data warehouse, the organization still needs a separate, consistent and centralized store of enterprise data, such as a relational database, that multiple users can access.

- A hybrid data mart combines data from a data warehouse with input from other sources, such as operational systems, and gives users ad hoc integration. Hybrid data marts require minimal data cleansing, support large storage structures and are flexible. They are well suited to environments with multiple databases and to organizations that need quick turnaround.
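To make the dependent pattern more concrete, here is a minimal sketch using Python's built-in `sqlite3` module. It assumes a single in-memory SQLite database can stand in for the enterprise warehouse (real warehouses run on dedicated platforms), and the table and column names (`warehouse_sales`, `sales_mart`, `department`, `region`, `amount`) and sample rows are hypothetical. The mart is populated only from the warehouse and keeps just the sales department's subject, pre-aggregated by region.

```python
import sqlite3


def build_dependent_mart(conn: sqlite3.Connection) -> None:
    """Populate a hypothetical sales data mart from a hypothetical warehouse table."""
    conn.executescript(
        """
        -- Hypothetical enterprise-wide warehouse table covering several departments.
        CREATE TABLE warehouse_sales (
            order_id   INTEGER PRIMARY KEY,
            department TEXT NOT NULL,
            region     TEXT NOT NULL,
            amount     REAL NOT NULL
        );
        INSERT INTO warehouse_sales (department, region, amount) VALUES
            ('sales',     'east', 120.0),
            ('sales',     'west',  80.0),
            ('sales',     'east',  50.0),
            ('marketing', 'east', 300.0);

        -- The dependent mart draws only from the warehouse and keeps only
        -- the subject the sales department needs, pre-aggregated by region.
        CREATE TABLE sales_mart AS
            SELECT region, SUM(amount) AS total_amount, COUNT(*) AS orders
            FROM warehouse_sales
            WHERE department = 'sales'
            GROUP BY region;
        """
    )


conn = sqlite3.connect(":memory:")
build_dependent_mart(conn)
for row in conn.execute("SELECT region, total_amount, orders FROM sales_mart ORDER BY region"):
    print(row)
```

Running it prints `('east', 170.0, 2)` and `('west', 80.0, 1)`. Materializing the mart as a table mirrors the physical copy a dependent mart usually is; the trade-off is that the copy has to be refreshed whenever the warehouse changes.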

Data mart vs. data warehouse

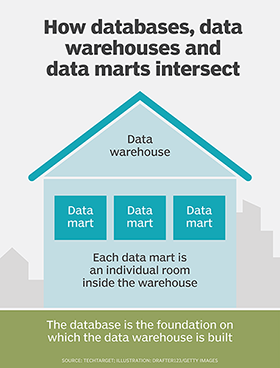

A data mart is essentially a simplified, subject-specific data warehouse. While data warehouses collect and manage data from many different sources, data marts focus on a single subject and draw data from only a handful of sources. Because of their broad scope, enterprise data warehouses are well suited to strategic decisions; data marts, being much smaller and narrower, are well suited to tactical business decisions. Data marts are used primarily by business divisions at the department level.

Data warehouses provide an integrated environment and a cohesive picture of the whole business, which makes them fairly difficult to design. Because data marts are less complicated, their design process is more straightforward. Data warehouses are large, typically ranging from 100 gigabytes (GB) to one or more terabytes (TB), while data marts are much smaller, often less than 100 GB.

The implementation process for data warehouses and data marts also differs considerably. Implementing a data warehouse can take anywhere from months to years, whereas implementing a data mart typically takes only a few months.

Data mart vs. data lake

Data lakes and data marts are often confused, but the terms are not interchangeable. Data lakes hold raw, undefined data whose purpose often has not yet been determined. Data marts hold specific data whose purpose has been clearly defined by their users. Because all the data in a data mart has been processed to fit a specific need, little space is wasted; a data lake, by contrast, is a repository for raw, unrefined and often unstructured data.

Because of their size, data lakes are often more expensive than data marts, and they require more maintenance to keep them from becoming stagnant. Because space is at a premium, data marts are designed to exclude duplicate and unused data, whereas data lakes can, and often do, contain redundant and unused data. For this reason, data lakes must be closely monitored to ensure that they do not become data swamps.

Because data lakes have no set structure, they are easy to access and alter. Data marts, by design, are more heavily structured, which makes them difficult and often costly to modify. This rigidity also makes data marts more secure.

Data mart vs. database

Databases often serve as the foundation for data warehouses, which in turn serve as the foundation for data marts. A single database can house multiple data marts, each specializing in a different subject. Databases are often called operational systems because they are commonly used to process a company's daily transactions; they are maintained with database management systems (DBMSs).

Cloud and virtual data marts

Data virtualization software can be used to create virtual data marts, pulling data from disparate sources and combining it with other data as necessary to meet the needs of specific business users. A virtual data mart provides knowledge workers with access to the data they need, while preventing data silos and giving the organization's data management team a level of control over the organization's data throughout its lifecycle.

Because a virtual data mart reads data where it already lives instead of copying it, it can prevent users from accidentally duplicating data. Virtual data marts can also reduce the time it takes to create a data mart, which reduces cost.
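Under heavy simplification, a virtual data mart can be approximated by a view that joins separate sources at query time instead of copying them. The minimal sketch below again uses Python's `sqlite3` module; two in-memory databases (one attached as `ops`) stand in for separate source systems, and all table, column and view names are hypothetical. Real data virtualization platforms federate queries across very different systems, which this sketch does not attempt.

```python
import sqlite3

# Two hypothetical, disparate sources: a CRM database (main) and an
# operational orders database (attached as "ops"). Both are in-memory here.
conn = sqlite3.connect(":memory:")
conn.execute("ATTACH DATABASE ':memory:' AS ops")

conn.executescript(
    """
    CREATE TABLE main.customers (customer_id INTEGER PRIMARY KEY, name TEXT, segment TEXT);
    INSERT INTO main.customers VALUES (1, 'Acme', 'enterprise'), (2, 'Bolt', 'smb');

    CREATE TABLE ops.orders (order_id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL);
    INSERT INTO ops.orders VALUES (10, 1, 500.0), (11, 1, 250.0), (12, 2, 40.0);

    -- The hypothetical "virtual data mart": a view that joins the sources at
    -- query time. No rows are copied, so there is no second physical copy.
    -- TEMP is needed because SQLite only lets temporary views reference
    -- tables in other attached databases.
    CREATE TEMP VIEW enterprise_revenue_mart AS
        SELECT c.name, SUM(o.amount) AS revenue
        FROM main.customers AS c
        JOIN ops.orders AS o ON o.customer_id = c.customer_id
        WHERE c.segment = 'enterprise'
        GROUP BY c.name;
    """
)

print(conn.execute("SELECT * FROM enterprise_revenue_mart").fetchall())

# A change in a source system is visible through the view immediately.
conn.execute("INSERT INTO ops.orders VALUES (13, 1, 100.0)")
print(conn.execute("SELECT * FROM enterprise_revenue_mart").fetchall())
```

The first query returns `[('Acme', 750.0)]`; after a new order lands in the source, the same view returns `[('Acme', 850.0)]` with no reload step. That query-in-place behavior is what lets a virtual mart avoid duplicated copies, at the cost of running the join every time the mart is read.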

Another approach is to create a data mart with public cloud services. This data mart-as-a-service approach lets companies eliminate the on-premises infrastructure that data management would otherwise require. It also lets them scale quickly and deliver data to business users anywhere over the web for use in business intelligence (BI) and data visualization applications.