master data management (MDM)

What is master data management (MDM)?

Master data management (MDM) is a process that creates a uniform set of data on customers, products, suppliers and other business entities across different IT systems. One of the core disciplines in the overall data management process, MDM helps improve the quality of an organization's data by ensuring that identifiers and other key data elements about those entities are accurate and consistent, enterprise-wide.

When done properly, MDM can also streamline data sharing between different business systems and facilitate data processing in IT environments that contain a variety of platforms and applications. In addition, effective master data management helps make the data used in business intelligence (BI) and analytics applications more trustworthy.

Master data management grew out of previously separate methodologies focused on consolidating data for specific entities -- in particular, customer data integration (CDI) and product information management (PIM). MDM brought them together into a single category with a broader focus, although CDI and PIM are still active subcategories.

Importance of master data management

Business operations depend on transaction processing systems, and BI and analytics increasingly drive marketing campaigns, customer engagement efforts, supply chain management and other business processes. But many companies don't have a single view of their customers. Commonly, that's because customer data differs from one system to another. For example, customer records might not be identical in order entry, shipping and customer service systems due to variations in names, addresses and other attributes.

Similar inconsistencies can also occur in product data and other types of information. Such issues cause business problems if critical data can't be accessed or is missed by end users. Master data management programs help avoid that by consolidating data from multiple source systems into a standard format to provide the needed single view of business entities.

In the case of customer data, MDM harmonizes it to create a unified set of master data for use in all applicable systems. That enables organizations to eliminate duplicate customer records with mismatched data, giving operational workers, business executives, data scientists and other users access to comprehensive customer information without having to manually combine different data entries.

What is master data?

Master data is often called a golden record of information in a data domain, which corresponds to the entity that's the subject of the data being mastered. Data domains vary from industry to industry. For example, common ones for manufacturers include customers, products, suppliers and materials. Banks might focus on customers, accounts and products, the latter meaning financial ones. Patients, equipment and supplies are among the applicable data domains in healthcare organizations. For insurers, they include members, products and claims, plus providers in the case of medical insurers.

Employees, locations and assets are examples of data domains that can be applied across industries as part of master data management initiatives. Another is reference data, which consists of codes for countries and states, currencies, order status entries and other generic values.

Master data doesn't include transactions processed in the various data domains. Instead, it essentially functions as a master file of dates, names, addresses, customer IDs, item numbers, product specifications and other attributes that are used in transaction processing systems and analytics applications. As a result, well-managed master data is also frequently described as a single source of truth -- or, alternatively, a single version of the truth -- about an organization's data, as well as data from external sources that's ingested into corporate systems to augment internal data sets.

MDM architecture

There are two forms of master data management that can be implemented separately or in tandem: analytical MDM, which aims to feed consistent master data to data warehouses and other analytics systems, and operational MDM, which focuses on the master data in core business systems. Both provide a systematic approach to managing master data, typically enabled by the deployment of a centralized MDM hub where the master data is stored and maintained.

However, there are different ways to architect MDM systems, depending on how organizations want to structure their master data management programs and the connections between the MDM hub and source systems. The primary MDM architectural styles that have been identified by data management consultants and MDM software vendors include the following:

- A registry architecture. This style creates a unified index of master data for analytical uses without changing any of the data in individual source systems. Regarded as the most lightweight MDM architecture, it uses data cleansing and matching tools to identify duplicate data entries in different systems and cross-reference them in the registry.

- A consolidation approach. In this style, sets of master data are pulled from various source systems and consolidated in the MDM hub, creating a centralized repository of consistent master data primarily used in BI, analytics and enterprise reporting. However, operational systems continue to use their own master data for transaction processing.

- A coexistence style. Likewise, this style creates a consolidated set of master data in the MDM hub. In this case, though, changes to the master data in individual source systems are updated in the hub and can then be propagated to other systems so they all use the same data, offering a balance between system-level management and centralized governance of master data.

- A transaction architecture. Also known as a centralized architecture, this approach moves all management and updating of master data to the MDM hub, which publishes data changes to each source system. It's the most intrusive style of MDM from an organizational standpoint because of the shift to full centralization, but it provides the highest level of enterprise control.

In addition to a master data storage repository and software to automate the interactions with source systems, a master data management framework typically includes change management, workflow and collaboration tools. Another available technology option is using data virtualization software to augment MDM hubs, which creates unified views of data from different systems virtually, without requiring any physical data movement.

Benefits of master data management

The following are some of the primary business benefits that MDM provides:

- Increased data consistency, both for operational and analytical uses. A uniform set of master data on customers and other entities can help reduce operational errors and optimize business processes -- for example, by ensuring that customer service representatives see all of the data on individual customers and that the shipping department has the correct addresses for deliveries. It can also boost the accuracy of BI and analytics applications, hopefully resulting in better strategic planning and business decision-making.

- Improved regulatory compliance. MDM initiatives can also aid efforts to comply with regulatory mandates, such as the Sarbanes-Oxley Act and the Health Insurance Portability and Accountability Act -- better known as HIPAA -- in the U.S. New data privacy and protection laws -- most notably, the European Union's General Data Protection Regulation (GDPR) and the California Consumer Privacy Act -- have become another driver for master data management, which can help companies identify all of the personal data they collect about people.

- More effective data governance. MDM also dovetails with data governance programs, which create standards, policies and procedures on data usage overall in organizations. MDM can help improve the data quality metrics typically used to demonstrate the business value of data governance efforts. Also, MDM systems can be configured to give federated views of master data to data stewards, who are charged with overseeing data sets and making sure that end users adhere to data governance policies.

MDM best practices

Best practices for managing MDM programs include the following actions:

- Include business stakeholders in the MDM process. While MDM is aided by technology, it's as much an organizational -- or people -- process as it is a technical one. As a result, it's important to involve business executives and users in MDM programs, especially if master data will be managed centrally and updated in operational systems by an MDM hub. The various data and business process owners in an organization should have a say in decisions on how master data is structured and policies for implementing changes to it in systems. Ideally, that starts at the very beginning, when the scope of an MDM initiative is defined.

- Document the potential business benefits upfront. Connecting MDM's expected benefits on the use of data assets to corporate strategies and business goals is generally a must to get management buy-in for a program, which is needed both to secure funding for the work and to overcome potential resistance internally.

- Build end-user training and education into the program. Business units and analytics teams should get training on the MDM process and the purposes behind it before a program starts.

- Plan for the long term and structure the program accordingly. MDM must be addressed as an ongoing initiative rather than a one-off project, as frequent updates to master data records are commonly needed. Some organizations have created MDM centers of excellence to establish and then manage their programs to help avoid roadblocks on the efforts to incorporate common sets of master data into business systems.

Challenges of master data management

Despite the benefits it offers, MDM can be a difficult undertaking. These are some of the common challenges it presents to organizations:

- Complexity. The potential benefits of master data management increase as the number and diversity of systems and applications in an organization expand. For this reason, MDM is more likely to be of value to large enterprises than small and medium-sized businesses. However, the complexity of enterprise MDM programs has limited their adoption even in large companies.

- Disagreements on enterprise data standards. One of the biggest hurdles is getting different business units and departments to agree on common master data standards. MDM efforts can lose momentum and get bogged down if users argue about how data is formatted in their separate systems.

- Project scoping issues. Another often-mentioned obstacle to successful MDM implementations is project scoping. The efforts can become unwieldy if the scope of the planned work gets out of control or if the implementation plan doesn't properly stage the required steps.

- Incorporating acquired companies into MDM programs. When companies merge, MDM can help streamline data integration, reduce incompatibilities and optimize operational efficiency in the newly combined organization. But the challenge of reaching consensus on master data among business units can be even greater after a merger or acquisition.

- Dealing with sets of big data. The growing use of big data systems in organizations can also complicate the MDM process by adding new forms of unstructured and semistructured data stored in a variety of platforms, including Hadoop clusters, other types of data lake systems and newer data lakehouse environments.

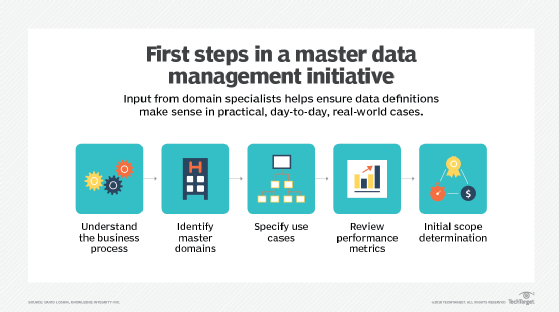

Key steps in the MDM process

MDM initiatives typically are long projects that include various phases and tasks, including the following key steps:

- Identify all relevant data sources for a particular domain and the business owners of each data source.

- Work with the various business stakeholders to agree on common formats for the master data across all the systems.

- Create a master data model that formalizes the structure of the master data records and maps them to the various source systems.

- Also with the stakeholders, decide what type of MDM architecture to deploy based on business needs and planned applications.

- Deploy any new systems or software tools that are needed to support the MDM process.

- Cleanse, consolidate and standardize data to fit the master data model, using data quality management and data transformation techniques.

- Match duplicate data records from multiple systems and merge them into single entries as part of the final master data list.

- Modify source systems as needed so they can access and use the master data during data processing operations.

Software that can be used to automate master data management tasks is available from various vendors, including MDM specialists and larger providers that offer a full line of data management tools. MDM software typically includes features for data cleansing, data matching and merging, workflow management, data modeling and other functions. In addition, it often incorporates data stewardship and data governance features or is integrated with companion tools that provide them.

Key roles and participants in an MDM initiative

Because of their complexity and their broad impact on business operations, MDM programs should involve a wide range of people in an organization. The level of involvement varies depending on the role: Some data management professionals might work full-time on MDM, while others devote part of their time to it, and business stakeholders usually take part on an occasional, though regular, basis.

These are some of the key positions and participants in the MDM process:

- MDM manager. This person oversees the planning, development and implementation of an MDM program. The job title could also be director of MDM, MDM program lead or another variation. In organizations with aligned MDM and data governance programs, a single manager might be put in charge of both initiatives.

- Master data specialist. As the title indicates, this is a technical role that focuses on the creation and maintenance of master data. Duties typically include upfront data cleansing, matching and merging, plus troubleshooting of data quality issues on an ongoing basis. In some organizations, the role is master data analyst or MDM analyst.

- Data stewards. As part of overseeing data sets in specific domains, data stewards often are involved in MDM programs. For example, they can handle some of the data management and maintenance tasks or work with master data specialists on those functions. They can also ensure that business units comply with internal master data standards.

- Other data management professionals. Various data management team members can play a part in MDM programs, too. That includes data architects and data modelers, as well as data quality analysts who help with data cleansing and ETL developers who create extract, transform and load jobs to pull together master data from different source systems.

- Executive sponsor. MDM programs are big and often expensive initiatives, and they can lead to internal infighting over master data standards and face resistance from business units. As a result, they commonly need an executive sponsor who can ensure that a program gets required funding, help to resolve conflicts and promote adoption.

- Business stakeholders. Business executives whose operations are affected by an MDM program need to be involved in master data decision-making activities. Alternatively, they can designate subject matter experts to represent their business units. In many cases, participating stakeholders are organized into a steering committee that meets regularly. Organizations with an existing data governance council might instead use it to make MDM-related decisions.