data quality

What is data quality?

Data quality is a measure of a data set's condition based on factors such as accuracy, completeness, consistency, reliability and validity. Measuring data quality can help organizations identify errors and inconsistencies in their data and assess whether the data fits its intended purpose.

Organizations have grown increasingly concerned about data quality as they've come to recognize the important role that data plays in business operations and advanced analytics, which are used to drive business decisions. Data quality management is a core component of an organization's overall data governance strategy.

Data governance ensures that the data is properly stored, managed, protected, and used consistently throughout an organization.

Why is data quality so important?

Low-quality data can have significant business consequences for an organization. Bad data is often the culprit behind operational snafus, inaccurate analytics and ill-conceived business strategies. For example, it can potentially cause any of the following problems:

- Shipping products to the wrong customer addresses.

- Missing sales opportunities because of erroneous or incomplete customer records.

- Being fined for improper financial or regulatory compliance reporting.

In 2021, consulting firm Gartner stated that bad data quality costs organizations an average of $12.9 million per year. Another figure that's still often cited comes from IBM, which estimated that data quality issues in the U.S. cost $3.1 trillion in 2016. And in an article he wrote for the MIT Sloan Management Review in 2017, data quality consultant Thomas Redman estimated that correcting data errors and dealing with the business problems caused by bad data costs companies an average of 15% to 25% of their annual revenue.

In addition, a lack of trust in data on the part of corporate executives and business managers is commonly cited among the chief impediments to using business intelligence (BI) and analytics tools to improve decision-making in organizations. At the same time, data volumes are growing at staggering rates, and the data is more diverse than ever. Never has it been more important for an organization to implement an effective data quality management strategy.

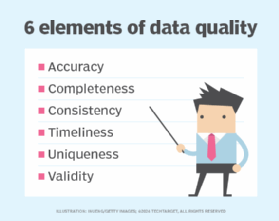

What are the six elements of data quality?

Low-quality data can lead to transaction processing problems in operational systems and faulty results in analytics applications. Such data needs to be identified, documented and fixed to ensure that business executives, data analysts and other business users are working with good information. High-quality data should possess the following six characteristics:

- Accuracy. The data correctly represents the entities or events it is supposed to represent, and the data comes from sources that are verifiable and trustworthy.

- Consistency. The data is uniform across systems and data sets, and there are no conflicts between the same data values in different systems or data sets.

- Validity. The data conforms to defined business rules and parameters, which ensure that the data is properly structured and contains the values it should.

- Completeness. The data includes all the values and types of data it is expected to contain, including any metadata that should accompany the data sets.

- Timeliness. The data is current (relative to its specific requirements) and is available to use when it's needed.

- Uniqueness. The data does not contain duplicate records within a single data set, and every record can be uniquely identified.

A data set that meets all of these measures is much more reliable and trustworthy than one that does not. However, these are not necessarily the only standards that organizations use to assess their data sets. For example, they might also take into account qualities such as appropriateness, credibility, relevance, reliability or usability. The goal is to ensure that the data fits its intended purpose and that it can be trusted.

Benefits of good data quality

From a financial standpoint, maintaining high data quality enables organizations to reduce the costs associated with identifying and fixing bad data when a data-related issue arises. Maintaining data quality also helps to avoid operational errors and business process breakdowns, which can increase operating expenses and reduce revenues.

In addition, good data quality increases the accuracy of analytics, including those that rely on artificial intelligence (AI) technologies. This can lead to better business decisions, which in turn can lead to improved internal processes, competitive advantages and higher sales. Good-quality data also improves the information available through BI dashboards and other analytics tools. If business users consider the analytics to be trustworthy, they're more likely to rely on them instead of basing decisions on gut feelings or simple spreadsheets.

Effective data quality management also frees up data teams to focus on more productive tasks, rather than on troubleshooting issues and cleaning up the data when problems occur. For example, they can spend more time helping business users and data analysts take advantage of the available data while promoting data quality best practices in business operations.

Assessing data quality

As a first step toward assessing data quality, organizations typically inventory their data assets and conduct baseline studies to measure the relative accuracy, uniqueness and validity of each data set. The established baselines can then be compared against the data on an ongoing basis to help ensure that existing concerns are being addressed and to identify new data quality issues.

Various methodologies have been developed for assessing data quality. For example, data managers at UnitedHealth Group's Optum healthcare services subsidiary created the Data Quality Assessment Framework (DQAF) in 2009 to formalize a method for assessing its data quality. The DQAF provides guidelines for measuring data quality based on four dimensions: completeness, timeliness, validity and consistency. Optum publicized details about the framework as a possible model for other organizations.

The International Monetary Fund (IMF), which oversees the global monetary system and lends money to economically troubled nations, has also specified an assessment methodology with the same name as the Optum one. Its framework focuses on accuracy, reliability, consistency and other data quality attributes in the statistical data that member countries must submit to the IMF. In addition, the U.S. government's Office of the National Coordinator for Health Information Technology has detailed a data quality framework for patient demographic data collected by healthcare organizations.

Addressing data quality issues

In many organizations, analysts, engineers and data quality managers are the primary people responsible for fixing data errors and addressing other data quality issues. They're collectively tasked with finding and cleansing bad data in databases and other data repositories, often with assistance and support from other data management professionals, including data stewards and data governance program managers.

A data quality initiative might also involve business users, data scientists and other analysts in the process to help reduce the number of data quality issues. Participation might be facilitated, at least in part, through the organization's data governance program. In addition, many companies provide training to end users on data quality best practices. A common mantra among data managers is that everyone in an organization is responsible for data quality.

To address data quality issues, a data management team often creates a set of data quality rules based on business requirements for both operational and analytics data. The rules define the required data quality levels and how data should be cleansed and standardized to ensure accuracy, consistency and other data quality attributes.

After the rules are in place, a data management team typically conducts a data quality assessment, documenting errors and other problems -- a procedure that can be repeated at regular intervals to ensure the highest data quality possible.

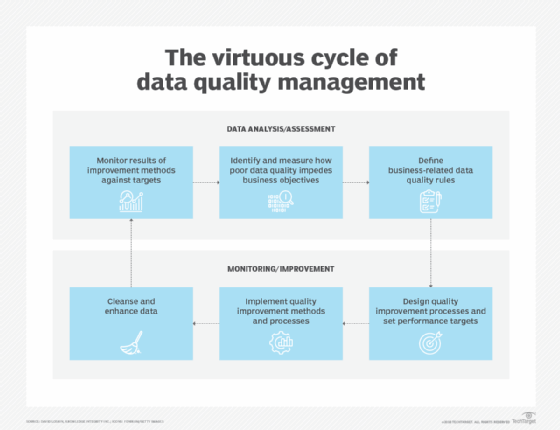

Not all data management teams approach data quality in the same way. For example, data management consultant David Loshin outlined a data quality management cycle that begins with identifying and measuring the effect that bad data has on business operations. The team then defines data quality rules and sets performance targets for improving data quality metrics.

Next, the team designs and implements specific data quality improvement processes. The processes include data cleansing (data scrubbing), fixing data errors, and enhancing data sets by adding missing values or providing more up-to-date information or additional records.

The results are then monitored and measured against the performance targets. Any remaining deficiencies in data quality serve as a starting point for the next round of planned improvements. Such a cycle is intended to ensure that efforts to improve overall data quality continue after individual projects are completed.

Data quality management tools and techniques

Organizations often turn to data quality management tools to help streamline their efforts. These tools can match records, delete duplicates, validate new data, establish remediation policies and identify personal data in data sets. Some products can also perform data profiling, which examines, analyzes and summarizes data sets.

Many of these tools now include augmented data quality functions that automate tasks and procedures, often through the use of machine learning and other AI technologies. Most tools also include centralized consoles or portals for performing management tasks. For example, users might be able to create data handling rules, identify data relationships or automate data transformations through the central interface.

Data quality managers and data stewards might also use collaboration and workflow tools that provide shared views of the organization's data repositories and enable them to oversee specific data sets. These and other data management tools might be selected as part of an organization's larger data governance strategy. The tools can also play a role in the organization's master data management (MDM) initiatives, which establish registries of master data on customers, products, supply chains, and other data domains.

Emerging data quality challenges

For many years, data quality efforts centered on structured data stored in relational databases, which were the dominant technology for managing data. But data quality concerns expanded as cloud computing and big data initiatives became more widespread. In addition to structured data, data managers must now also take into account unstructured data and semi-structured data, such as text files, internet clickstream records, sensor data, and network, system and application logs.

A number of other factors also now play a role in data quality, adding to the complexity of managing data:

- Many of today's organizations work with both on-premises and cloud systems.

- A growing number of organizations are incorporating machine learning and other AI technologies into their operations and products.

- Many organizations have implemented real-time data streaming platforms that continuously funnel large volumes of data into corporate systems.

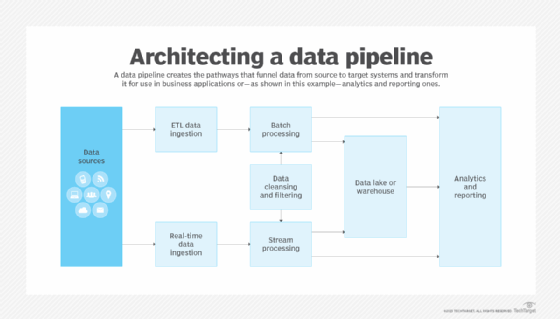

- Data scientists are implementing complex data pipelines to support their research and advanced analytics.

Data quality concerns are also growing due to the implementation of data privacy and protection laws such as the European Union's General Data Protection Regulation (GDPR) and the California Consumer Privacy Act (CCPA). Both measures give people the right to access the personal data that companies collect about them, which means organizations must be able to find all of the records on an individual in their systems without missing any because of inaccurate or inconsistent data.

Data quality vs. data integrity

The terms data quality and data integrity are sometimes used interchangeably, although they have different meanings. At the same time, some people treat data integrity as a facet of data quality or data quality as a component of data integrity. Others consider both data quality and data integrity to be part of a larger data governance effort, while still others consider data integrity to be a broader concept that combines data quality, data governance and data protection into a unified effort for addressing data accuracy, consistency and security.

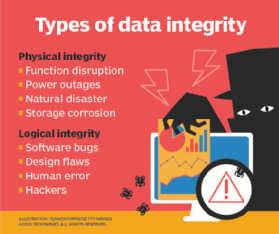

From a broader perspective, data integrity focuses on the data's logical and physical validity. Logical integrity includes data quality measures and database attributes such as referential integrity, which ensures that related data elements in different database tables are valid.

Physical integrity is concerned with access controls and other security measures designed to prevent data from being modified or corrupted by unauthorized users. It is also concerned with protections such as backups and disaster recovery. In contrast, data quality is focused more on the data's ability to serve its specified purpose.

Check out 11 features to look for in data quality management tools. See how data governance and data quality work together and explore five steps that improve data quality assurance plans. Learn about four data quality challenges that hinder data operations and check out eight proactive steps to improve data quality. Read how data quality shapes machine learning and AI outcomes.